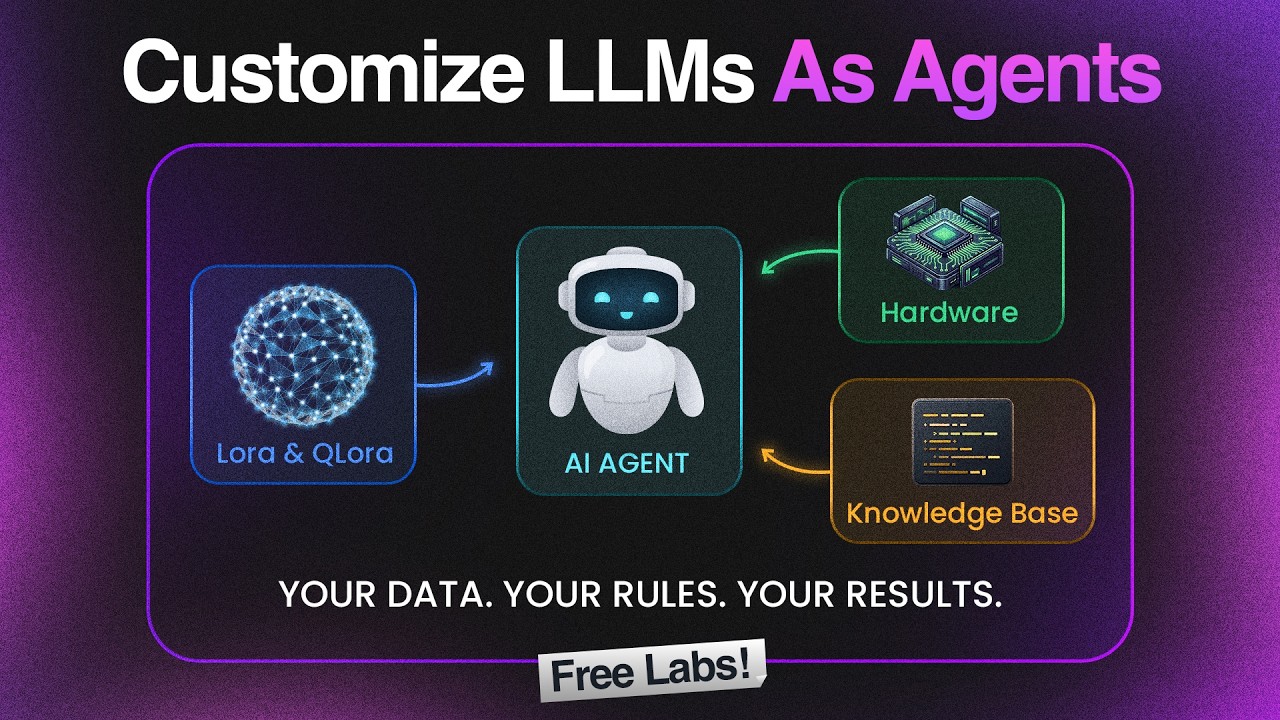

Fine-Tuning LLMs with LoRA and QLoRA (Free Labs)

Customize LLMs & Agents for FREE: https://kode.wiki/3QcX45W

Most teams rely on prompt engineering. The ones building reliable production AI agents are fine-tuning their models.

This video walks you through the complete data preparation pipeline for fine-tuning LLMs using LoRA and QLoRA, inside a real hands-on KodeKloud lab with a live Secure Ops scenario.

No fluff. No theory overload. Just structured, hands-on learning, from why your training data format matters all the way to testing your dataset against a live LLM for alignment scoring.

─────────────────────────────────────────

WHAT YOU'LL LEARN IN THIS VIDEO

─────────────────────────────────────────

✅ Why fine-tuning beats prompt engineering for enterprise AI agents

✅ How LoRA and QLoRA work and why they make fine-tuning viable on consumer GPUs

✅ Memory math breakdown: 1B-, 7B-, and 70B-parameter models with QLoRA

✅ How to transform raw security logs into JSONL training data
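To make the LoRA idea and the memory math a little more concrete, here is a rough back-of-the-envelope sketch in Python. It is not the lab's code. It assumes 2 bytes per parameter for fp16 weights and 0.5 bytes per parameter for 4-bit (QLoRA-style) base weights, counts the base weights only (no activations, optimizer states, adapters, or quantization constants), and uses an illustrative 4096×4096 projection with a hypothetical LoRA rank of 8.

```python
"""Back-of-the-envelope numbers for LoRA and QLoRA (illustrative assumptions only)."""

# Bytes needed to store one parameter at a given precision.
BYTES_PER_PARAM = {
    "fp16": 2.0,  # 16-bit floats
    "4bit": 0.5,  # 4-bit quantized base weights, as used by QLoRA
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory for the base weights alone, in GB (1 GB = 1e9 bytes).

    Excludes activations, LoRA adapters, optimizer states, and quantization
    constants, so real GPU usage during training is higher.
    """
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA freezes W (d_out x d_in) and trains B (d_out x r) and A (r x d_in)."""
    return rank * (d_out + d_in)


if __name__ == "__main__":
    for label, n in [("1B", 1e9), ("7B", 7e9), ("70B", 70e9)]:
        print(
            f"{label:>3}: fp16 ~{weight_memory_gb(n, 'fp16'):6.1f} GB | "
            f"4-bit ~{weight_memory_gb(n, '4bit'):5.1f} GB"
        )

    # Hypothetical example: one 4096 x 4096 projection adapted with rank 8.
    full = 4096 * 4096
    lora = lora_trainable_params(4096, 4096, rank=8)
    print(f"full: {full:,} params | LoRA r=8: {lora:,} params ({lora / full:.2%})")
```

Under these assumptions a 7B model needs roughly 14 GB for fp16 weights versus about 3.5 GB at 4 bits, and a rank-8 adapter on a 4096×4096 matrix trains 65,536 parameters instead of 16,777,216 (about 0.39%). Those two savings together are what make QLoRA-style fine-tuning plausible on a single consumer GPU.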

FREE HANDS-ON LAB INCLUDED: https://kode.wiki/3QcX45W

Practice everything in a real sandbox environment with no local setup, no credit card, no surprises.

The GPU environment, dependencies, and all lab tasks are already configured and ready to go.

⏱️ TIMESTAMPS

00:00 – Introduction: Why Fine-Tuning Beats Prompt Engineering

00:38 – Hardware Requirements

01:04 – LoRA and QLoRA Explained

02:10 – Training Data Requirements

03:31 – Lab Intro: Customize LLMs & Agents

04:54 – Task 0: Environment Setup

05:18 – Task 1: Why Data Format Matters

06:14 – Task 2: Log Transformation

07:38 – Task 3: Agent Persona Training Data

08:50 – Task 4: Classification Dataset

09:41 – Task 5: Data Quality Validation

10:33 – Task 6: Verify with LLM Inference

11:38 – Key Takeaways
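Tasks 2 and 5 boil down to turning raw log lines into JSONL records and checking them before training. The Python sketch below is a minimal illustration, not the lab's implementation: the log format, the `SEVERITY` labels, the system prompt, and the chat-style `messages` schema are all hypothetical assumptions chosen for the example.

```python
import json

# Hypothetical raw log lines: "<timestamp> <service> <event> key=value ...".
# The lab's real log format may differ.
RAW_LOGS = [
    "2024-05-01T12:00:03Z sshd FAILED_LOGIN user=root src=203.0.113.7",
    "2024-05-01T12:00:09Z sshd FAILED_LOGIN user=root src=203.0.113.7",
    "2024-05-01T12:05:41Z nginx HTTP_403 path=/admin src=198.51.100.23",
]

# Hypothetical event-to-severity labels used to build the target answer.
SEVERITY = {"FAILED_LOGIN": "high", "HTTP_403": "medium"}

# Hypothetical persona prompt for the agent being fine-tuned.
SYSTEM_PROMPT = "You are a SecOps assistant. Classify the severity of each log event."

EXPECTED_ROLES = ["system", "user", "assistant"]


def parse_log(line: str) -> dict:
    """Split one log line into timestamp, service, event, and key=value fields."""
    timestamp, service, event, *pairs = line.split()
    fields = dict(pair.split("=", 1) for pair in pairs)
    return {"timestamp": timestamp, "service": service, "event": event, **fields}


def to_example(line: str) -> dict:
    """Turn one raw log line into a chat-style training record."""
    parsed = parse_log(line)
    severity = SEVERITY.get(parsed["event"], "unknown")
    answer = f"Severity: {severity}. Event: {parsed['event']} on {parsed['service']}."
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": line},
            {"role": "assistant", "content": answer},
        ]
    }


def validate(records: list) -> list:
    """Return human-readable problems: wrong roles, empty turns, duplicate inputs."""
    problems, seen_inputs = [], set()
    for i, record in enumerate(records):
        messages = record.get("messages", [])
        roles = [m.get("role") for m in messages]
        if roles != EXPECTED_ROLES:
            problems.append(f"record {i}: unexpected roles {roles}")
        if any(not str(m.get("content", "")).strip() for m in messages):
            problems.append(f"record {i}: empty content")
        user_input = next((m.get("content") for m in messages if m.get("role") == "user"), None)
        if user_input in seen_inputs:
            problems.append(f"record {i}: duplicate user input")
        seen_inputs.add(user_input)
    return problems


def to_jsonl(records: list) -> str:
    """Serialize records as JSONL: one JSON object per line."""
    return "\n".join(json.dumps(record) for record in records)


if __name__ == "__main__":
    records = [to_example(line) for line in RAW_LOGS]
    issues = validate(records)
    if issues:
        raise SystemExit("\n".join(issues))
    print(to_jsonl(records))
```

Each record becomes exactly one line of JSON, so the output can be written straight to a `.jsonl` file. Running a validation pass first catches broken role order, empty turns, and duplicated examples, which can quietly over-weight or corrupt a small fine-tuning set.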

#LLMFineTuning #QLoRA #LoRA #AIAgent #MachineLearning #LargeLanguageModels #DevOps #KodeKloud #AITraining #FineTuneGPT #MLOps #AIEngineer #DataPreparation #HandsOnLab #CloudAI #OpenAI #DeepLearning #GenerativeAI #AIDevOps #LLMTraining #AITutorial #LearnAI #PromptEngineering

KodeKloud
