GCP Data Engineer Question 12

GCP Dataflow: Solving Late-Arriving Data! #shorts

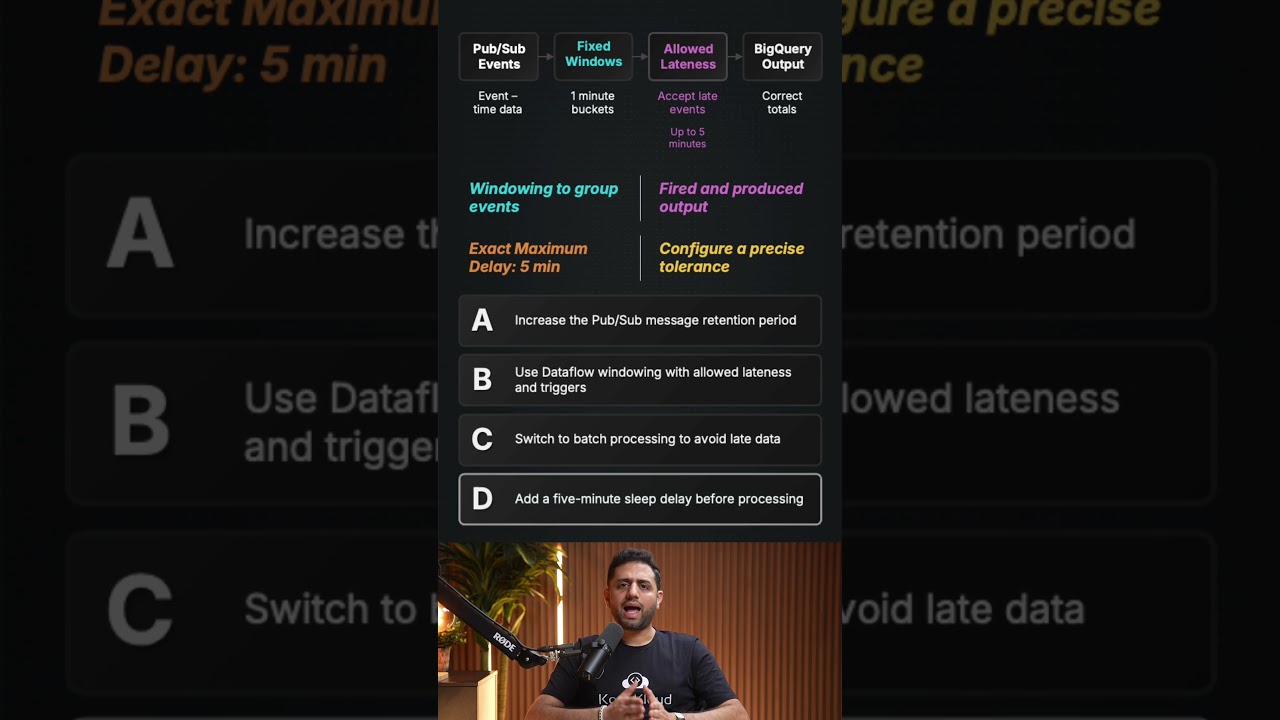

To accurately process data that arrives up to five minutes late in a streaming pipeline, implement allowed lateness combined with triggers. With allowed lateness set to five minutes, Dataflow keeps the state for each one-minute window for five more minutes after the watermark passes the end of the window (the point at which the window "closes"), so late messages are still assigned to the correct window and a trigger emits a revised total. The alternatives do not address the problem: Pub/Sub message retention only controls how long messages stay in the queue, and manual sleep delays artificially lag the entire pipeline without fixing the underlying windowing logic. For the GCP exam, the expected answer for reconciling late streaming data is this combination of windowing tolerance and a triggering strategy.
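The behavior described above can be sketched in plain Python. This is only a simulation of the semantics, not Dataflow or Apache Beam code: the function names, the `(event_time, watermark, value)` input format, and the sample events are all assumptions made for illustration. In the Beam Python SDK, the equivalent settings are, as far as I know, passed to `beam.WindowInto` through its `trigger`, `accumulation_mode`, and `allowed_lateness` arguments.

```python
from collections import defaultdict

WINDOW_SIZE = 60        # one-minute fixed windows, in seconds
ALLOWED_LATENESS = 300  # five minutes of allowed lateness, in seconds


def window_start(event_time):
    """Return the start of the fixed window that contains event_time."""
    return event_time - (event_time % WINDOW_SIZE)


def process(events):
    """Simulate per-window sums with allowed lateness (illustrative only).

    events: (event_time, watermark, value) tuples in arrival order, where
    watermark is the pipeline's event-time progress when the element arrives.
    Returns (emissions, dropped):
      emissions: list of (window_start, pane_kind, running_total)
      dropped:   list of (event_time, value) that arrived too late
    """
    totals = defaultdict(int)
    fired = set()  # windows that have already produced a pane
    emissions, dropped = [], []

    for event_time, watermark, value in events:
        # Fire the on-time pane for every window the watermark has passed.
        for ws in sorted(totals):
            if ws not in fired and ws + WINDOW_SIZE <= watermark:
                emissions.append((ws, "on_time", totals[ws]))
                fired.add(ws)

        ws = window_start(event_time)
        window_end = ws + WINDOW_SIZE
        if watermark >= window_end + ALLOWED_LATENESS:
            # State for this window has been discarded: the element is lost.
            dropped.append((event_time, value))
        elif watermark >= window_end:
            # Late but within allowed lateness: emit a revised total.
            totals[ws] += value
            fired.add(ws)
            emissions.append((ws, "late", totals[ws]))
        else:
            # On time: buffer until the watermark passes the window end.
            totals[ws] += value

    # End of input: treat the watermark as advancing past all open windows.
    for ws in sorted(totals):
        if ws not in fired:
            emissions.append((ws, "on_time", totals[ws]))
    return emissions, dropped


# Hypothetical sample input: (event_time, watermark, value)
events = [
    (10, 10, 5),   # window [0, 60), on time
    (30, 40, 7),   # window [0, 60), on time
    (70, 75, 1),   # window [60, 120); watermark has passed window [0, 60)
    (50, 200, 3),  # window [0, 60), late but within five minutes
    (20, 400, 9),  # window [0, 60), past allowed lateness
]
emissions, dropped = process(events)
print(emissions)
print(dropped)
```

In this sample, the window starting at 0 first emits an on-time total of 12, then a revised total of 15 once the late element arrives. The element that arrives after the five-minute allowance has expired is dropped. The running totals accumulate across panes, which corresponds to an accumulating (rather than discarding) mode.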

#GCP #Dataflow #DataEngineering #StreamingData #ApacheBeam #BigData #GCPCertification #CloudComputing #PubSub #Windowing #TechTips #DataArchitecture

KodeKloud
